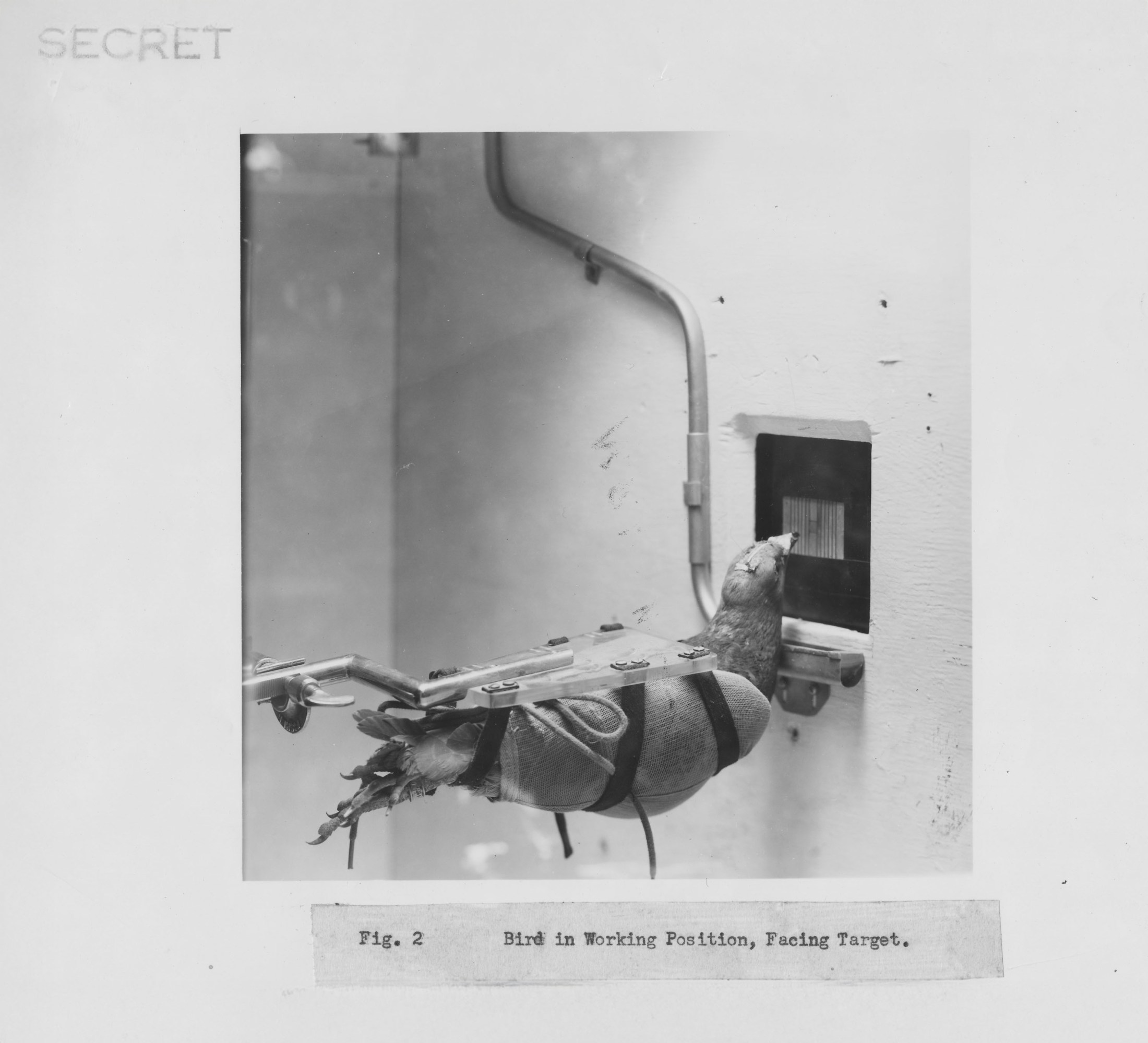

In 1943, during World War II, American psychologist B.F. Skinner embarked on a secret government project inspired by flocks of birds he had seen from a train window. Unlike the Manhattan Project, which sought a more destructive weapon, Skinner’s project aimed to make bombs more precise by using birds to steer them. He initially worked with crows but found them unmanageable and turned to pigeons. In what became known as “Project Pigeon,” he trained the birds to peck at targets on screens and proposed that they could guide a missile. Although the system was never used in warfare, the work strengthened Skinner’s belief in the effectiveness of associative learning, which later influenced the development of artificial intelligence.

Skinner’s methods centered on reinforcement: behaviors that are followed by rewards are strengthened and become more likely to recur. In the late 20th century, computer scientists like Richard Sutton and Andrew Barto adapted these ideas for AI as reinforcement learning, an approach now used for tasks like driving cars and playing chess. The same principles underpin notable AI accomplishments, including Google DeepMind’s AlphaGo Zero.
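To make the idea of reward strengthening a behavior concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the action names, reward values, and parameters are hypothetical, and the simple value update with occasional random exploration is a common textbook-style technique, not Skinner’s actual training procedure or any specific algorithm from Sutton and Barto.

```python
import random


def train_pigeon(trials=500, learning_rate=0.1, explore=0.1, seed=0):
    """Simulate a learner whose rewarded action gains value over time.

    All names and numbers here are hypothetical, chosen for illustration.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    actions = ["peck_target", "peck_elsewhere"]
    # Assumed reward scheme: only pecking the target earns food.
    reward_for = {"peck_target": 1.0, "peck_elsewhere": 0.0}
    # The learner starts with no preference for either action.
    value = {action: 0.0 for action in actions}

    for _ in range(trials):
        if rng.random() < explore:
            # Occasionally try an action at random.
            action = rng.choice(actions)
        else:
            # Otherwise repeat whichever action currently looks best.
            action = max(actions, key=value.get)
        reward = reward_for[action]
        # Nudge the action's value toward the reward it just produced.
        value[action] += learning_rate * (reward - value[action])

    return value


values = train_pigeon()
print(values)
```

In this sketch the estimated value of the rewarded action climbs toward 1 while the unrewarded action stays at 0, so the simulated learner ends up choosing the rewarded action almost every time.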

Although psychology shifted away from purely behaviorist views after critiques by figures like Noam Chomsky, Skinner’s ideas persisted in AI. His work suggested that associative learning, which is based on reinforcement rather than complex cognitive processes, could yield behaviors and insights previously thought exclusive to human intelligence.

The associative capabilities of AI have in turn prompted researchers to re-evaluate the role of associative learning in animals. Scientists like Johan Lind and Ed Wasserman argue that many behaviors attributed to higher cognition might stem from associative processes. In their view, AI’s success, which is grounded in these same principles, points to a broader understanding of intelligence that bridges artificial achievements and biological insights.